Probabilistic Occlusion Culling using Confidence Maps for High-Quality Rendering of Large Particle Data

Mohamed Ibrahim, Peter Rautek, Guido Reina, Marco Agus, Markus Hadwiger

View presentation: 2021-10-28T15:45:00Z

Fast forward

Direct link to video on YouTube: https://youtu.be/zMbAIqrnRj0

Abstract

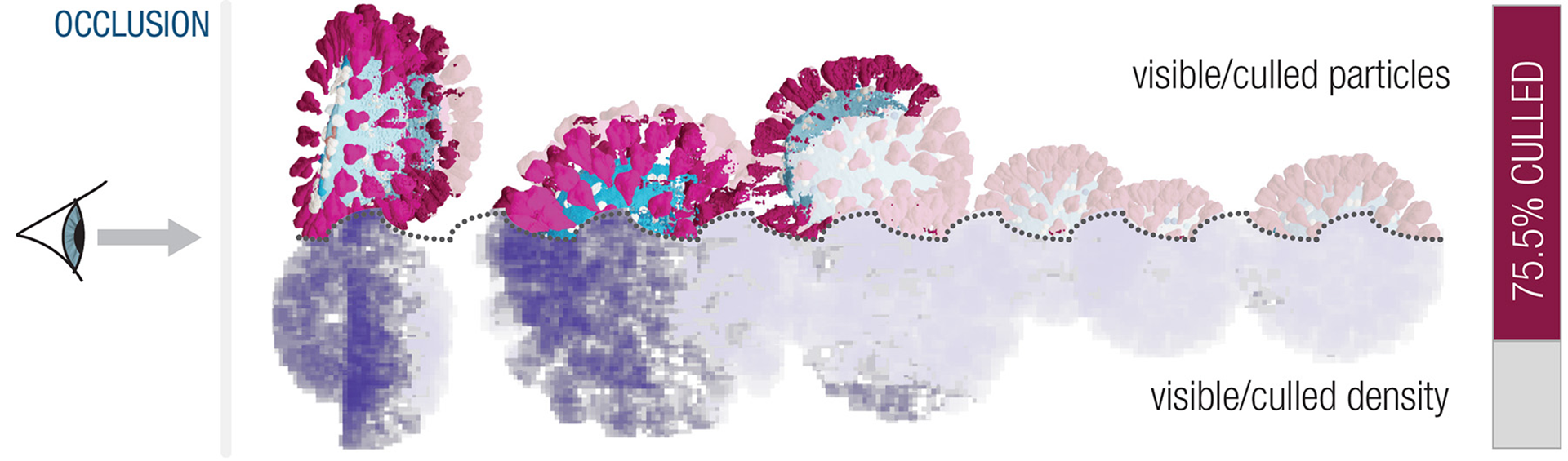

Achieving high rendering quality in the visualization of large particle data, for example from large-scale molecular dynamics simulations, requires substantial sub-pixel super-sampling, because very many particles project to each pixel. Although it is impossible to super-sample all particles of large-scale data at interactive rates, efficient occlusion culling can decouple the overall data size from a high effective sampling rate of visible particles. However, while such a high sampling rate is essential for domain scientists to see important data features, performing occlusion culling by sampling or sorting the data is usually slow, or error-prone because the resulting visibility estimates are of insufficient quality. We present a novel probabilistic culling architecture for super-sampled, high-quality rendering of large particle data. Occlusion is determined dynamically at the sub-pixel level, without explicit visibility sorting or data simplification. We introduce confidence maps that probabilistically estimate how reliable the visibility data gathered so far are. This enables progressive, confidence-based culling that helps avoid wrong visibility decisions. In this way, we determine particle visibility with high accuracy even though only a small part of the data set is sampled. This in turn enables extensive super-sampling of (partially) visible particles for high rendering quality, at a fraction of the cost of sampling all particles. For real-time performance with millions of particles, we exploit novel features of recent GPU architectures to group particles into two hierarchy levels, combining fine-grained culling with high frame rates.
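The abstract does not specify the confidence estimator, so what follows is only a minimal Python sketch of the general idea of progressive, confidence-gated occlusion culling. It rests on simplifying assumptions that are ours, not the paper's: each particle projects to a single pixel, covers a fixed set of that pixel's sub-pixels, and has one constant depth. The names `Particle`, `ConfidenceCuller`, and `progressive_cull` are hypothetical. The confidence measure, the fraction of a pixel's sub-pixels already covered by rendered particles, is a stand-in for the paper's probabilistic confidence maps. A particle is culled only if it lies behind the farthest depth stored among the pixel's covered sub-pixels and the pixel's confidence reaches a threshold.

```python
import random
from dataclasses import dataclass, field

INF = float("inf")


@dataclass
class Particle:
    pixel: int        # hypothetical: index of the single pixel the particle projects to
    subpixels: tuple  # indices of the sub-pixels it covers inside that pixel
    depth: float      # constant view-space depth; smaller means closer to the camera


@dataclass
class ConfidenceCuller:
    num_pixels: int
    subpixels_per_pixel: int
    threshold: float = 1.0  # hypothetical confidence threshold in [0, 1]
    depth: list = field(init=False, default_factory=list)

    def __post_init__(self):
        # Sub-pixel depth buffer; INF marks sub-pixels no rendered particle has covered yet.
        self.depth = [[INF] * self.subpixels_per_pixel for _ in range(self.num_pixels)]

    def splat(self, p):
        """Rasterize a rendered particle into the sub-pixel depth buffer (keep the nearest depth)."""
        row = self.depth[p.pixel]
        for s in p.subpixels:
            row[s] = min(row[s], p.depth)

    def confidence(self, pixel):
        """Stand-in confidence: fraction of the pixel's sub-pixels covered so far."""
        covered = sum(1 for d in self.depth[pixel] if d != INF)
        return covered / self.subpixels_per_pixel

    def occluder_depth(self, pixel):
        """Farthest stored depth among covered sub-pixels (INF if none are covered)."""
        covered = [d for d in self.depth[pixel] if d != INF]
        return max(covered) if covered else INF

    def is_culled(self, p):
        """Cull only if the particle is behind the occluder depth AND confidence is high enough."""
        return (self.confidence(p.pixel) >= self.threshold
                and p.depth > self.occluder_depth(p.pixel))


def progressive_cull(particles, culler, batch_size, seed=0):
    """Process particles in random batches; each batch is tested against earlier batches only."""
    order = list(particles)
    random.Random(seed).shuffle(order)
    rendered, culled = [], []
    for start in range(0, len(order), batch_size):
        batch = order[start:start + batch_size]
        # Decide the whole batch first, mimicking a GPU pass that reads the previous pass's maps.
        flags = [culler.is_culled(p) for p in batch]
        for p, is_hidden in zip(batch, flags):
            if is_hidden:
                culled.append(p)
            else:
                culler.splat(p)
                rendered.append(p)
    return rendered, culled
```

With `threshold=1.0`, the sketch culls a particle only when its pixel is fully covered and every sub-pixel already holds something nearer, which is exact under the single-pixel, constant-depth assumption. Lowering the threshold culls earlier, at the risk of hiding particles that uncovered sub-pixels would have revealed. That trade-off between early culling and wrong visibility decisions is what a probabilistic confidence estimate is meant to manage.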