Learning Adaptive Sampling and Reconstruction for Volume Visualization

Sebastian Weiss, Mustafa Isik, Justus Thies, Rüdiger Westermann

View presentation: 2021-10-27T13:15:00Z

Fast forward

Direct link to video on YouTube: https://youtu.be/UYobDzRMv8Y

Keywords

Volume visualization, adaptive sampling, deep learning

Abstract

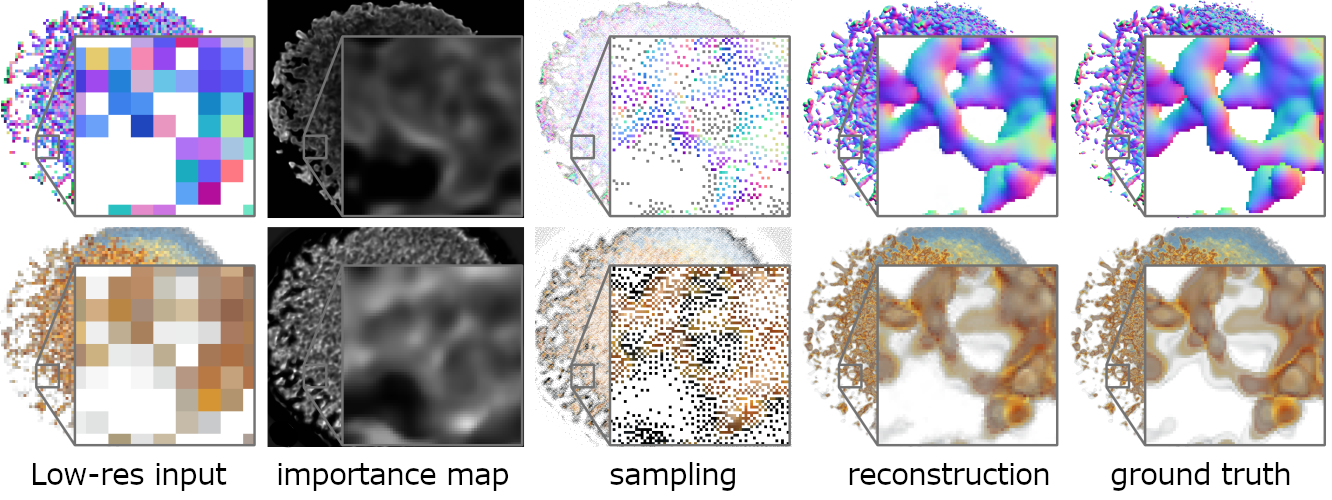

A central challenge in data visualization is to understand which data samples are required to generate an image of a data set in which the relevant information is encoded. In this work, we take a first step towards answering the question of whether an artificial neural network can predict where to sample the data with higher or lower density by learning correspondences between the data, the sampling patterns, and the generated images. We introduce a novel neural rendering pipeline that is trained end-to-end to generate a sparse adaptive sampling structure from a given low-resolution input image and to reconstruct a high-resolution image from the sparse set of samples. For the first time, to the best of our knowledge, we demonstrate that the selection of structures relevant for the final visual representation can be learned jointly with the reconstruction of this representation from these structures. To this end, we introduce differentiable sampling and reconstruction stages, which can leverage back-propagation based on supervised losses solely on the final image. We shed light on the adaptive sampling patterns generated by the network pipeline and analyze its use for volume visualization, including isosurface and direct volume rendering.
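
To make the idea of importance-driven adaptive sampling concrete, the following is a minimal NumPy sketch, not the authors' implementation. It assumes a hand-written gradient-magnitude heuristic as a stand-in for the learned importance network, and a hard random threshold in place of the differentiable sampling stage described in the abstract. The function names (`importance_from_low_res`, `normalize_to_budget`, `sample_mask`) and the `budget` and `floor` parameters are hypothetical and chosen only for illustration.

```python
import numpy as np


def importance_from_low_res(low_res, scale):
    """Hypothetical stand-in for a learned importance network.

    Uses the gradient magnitude of the low-resolution image as importance
    and upsamples it by an integer factor with nearest-neighbour repetition.
    """
    gy, gx = np.gradient(low_res)          # derivatives along rows, columns
    importance = np.hypot(gx, gy)          # per-pixel gradient magnitude
    return np.kron(importance, np.ones((scale, scale)))


def normalize_to_budget(importance, budget, floor=0.01):
    """Map importance to per-pixel sampling probabilities.

    Every pixel keeps at least `floor` probability; before clipping, the
    mean probability equals `budget` (the target fraction of pixels sampled).
    """
    imp = importance + 1e-8                # avoid division by zero
    prob = floor + (budget - floor) * imp / imp.mean()
    return np.clip(prob, 0.0, 1.0)


def sample_mask(prob, rng):
    """Select pixels by comparing probabilities against uniform noise.

    This hard threshold is NOT differentiable; it only illustrates how an
    importance map turns into a sparse sampling pattern.
    """
    return rng.random(prob.shape) < prob


# Usage: an 8x8 low-resolution image with a vertical edge, upsampled 4x.
low_res = np.zeros((8, 8))
low_res[:, 4:] = 1.0
prob = normalize_to_budget(importance_from_low_res(low_res, scale=4), budget=0.1)
mask = sample_mask(prob, np.random.default_rng(0))
print(f"sampled {mask.mean():.1%} of pixels")
```

In this sketch the samples concentrate around the edge while flat regions are sampled at the floor rate. A high-resolution image would then be reconstructed from the sparse samples, a step that this example leaves out.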