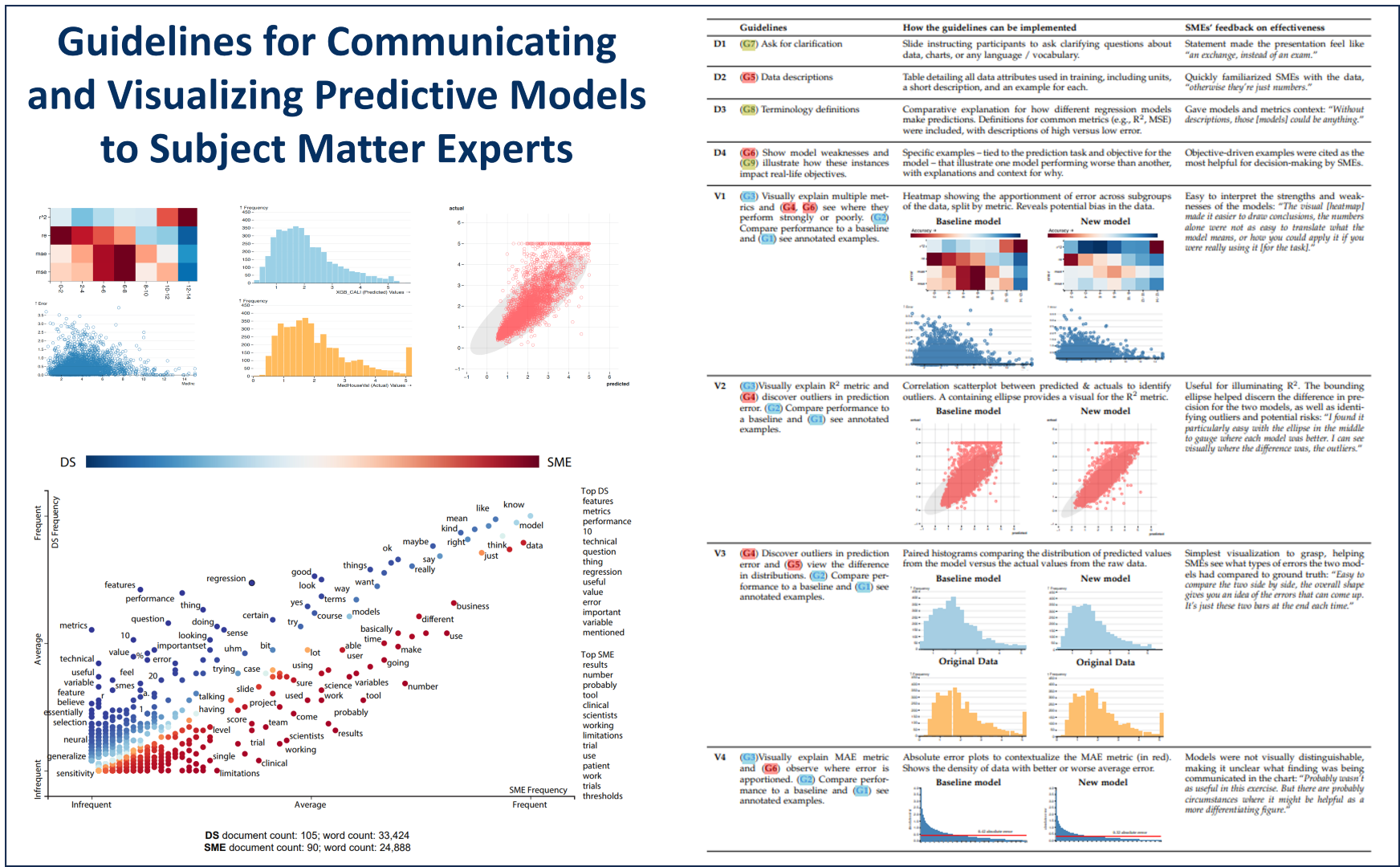

Are Metrics Enough? Guidelines for Communicating and Visualizing Predictive Models to Subject Matter Experts

Ashley Suh, Gabriel Appleby, Erik W. Anderson, Luca Finelli, Remco Chang, Dylan Cashman

DOI: 10.1109/TVCG.2023.3259341

Room: 109

2023-10-24T22:00:00Z

Keywords

Visualization techniques and methodologies; Human factors; Modeling and prediction; Data communications aspects

Abstract

Presenting a predictive model's performance is a communication bottleneck that threatens collaborations between data scientists and subject matter experts (SMEs). Accuracy and error metrics alone fail to tell the whole story of a model – its risks, strengths, and limitations – making it difficult for subject matter experts to feel confident in their decision to use a model. As a result, models may fail in unexpected ways or go entirely unused, as subject matter experts disregard poorly presented models in favor of familiar, yet arguably substandard, methods. In this paper, we describe an iterative study conducted with both subject matter experts and data scientists to understand the gaps in communication between these two groups. We find that, while the two groups share common goals of understanding the data and predictions of the model, friction can stem from unfamiliar terms, metrics, and visualizations – limiting the transfer of knowledge to SMEs and discouraging them from asking clarifying questions during presentations. Based on our findings, we derive a set of communication guidelines that use visualization as a common medium for communicating the strengths and weaknesses of a model. We provide a demonstration of our guidelines in a regression modeling scenario and elicit feedback on their use from subject matter experts. In our demonstration, subject matter experts were more comfortable discussing a model's performance, more aware of the trade-offs for the presented model, and better equipped to assess the model's risks – ultimately informing and contextualizing the model's use beyond text and numbers.