SliceTeller: A Data Slice-Driven Approach for Machine Learning Model Validation

Xiaoyu Zhang, Jorge H Piazentin Ono, Huan Song, Liang Gou, Kwan-Liu Ma, Liu Ren

View presentation: 2022-10-20T16:21:00Z

Prerecorded Talk

The live footage of the talk, including the Q&A, can be viewed on the session page, Interpreting Machine Learning.

Abstract

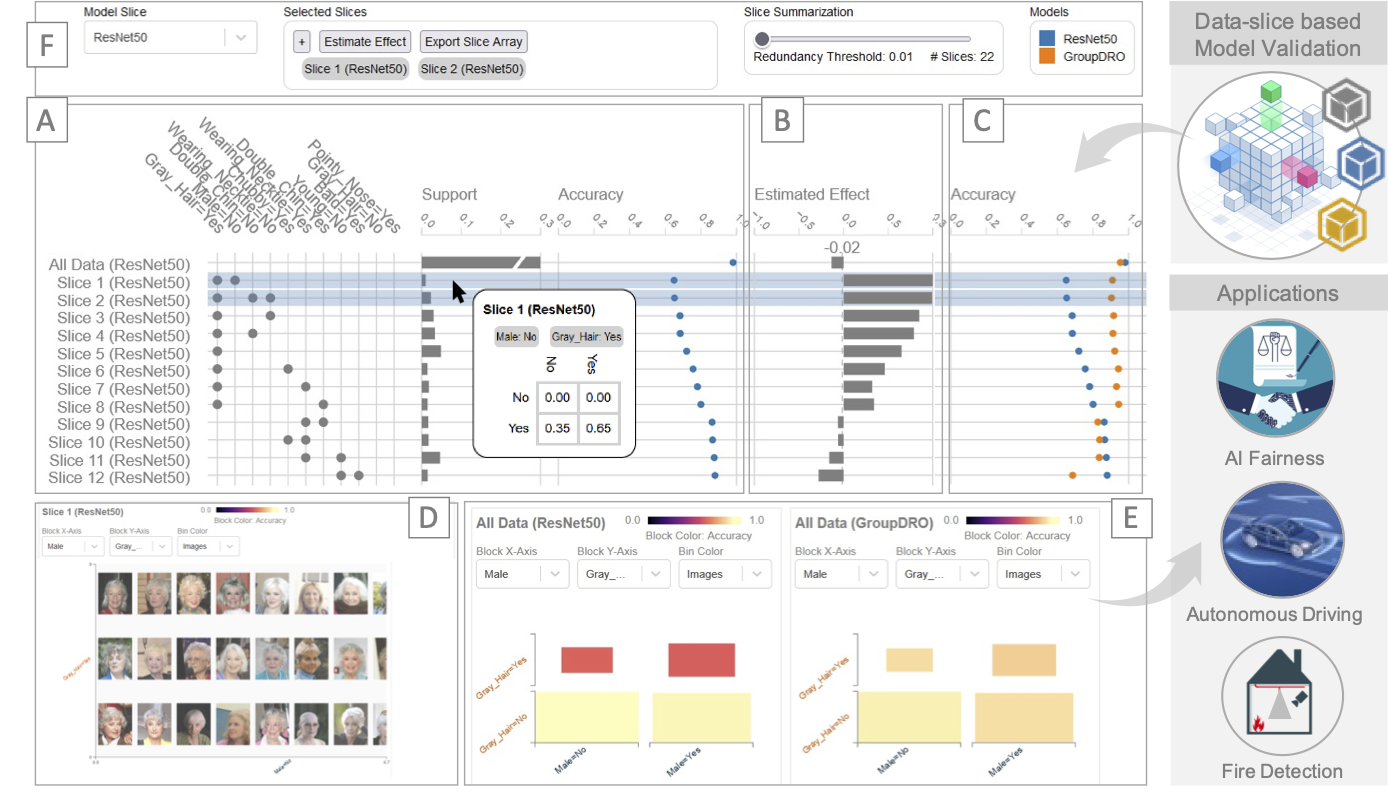

Real-world machine learning applications need to be thoroughly evaluated to meet critical product requirements for model release, to ensure fairness for different groups or individuals, and to achieve consistent performance across scenarios. For example, in autonomous driving, an object classification model should achieve high detection rates under different conditions of weather, distance, etc. Similarly, in the financial setting, credit-scoring models must not discriminate against minority groups. These conditions or groups are called “data slices”. In product MLOps cycles, product developers must identify such critical data slices and adapt models to mitigate data slice problems. Discovering where models fail, understanding why they fail, and mitigating these problems are therefore essential tasks in the MLOps life-cycle. In this paper, we present SliceTeller, a novel tool that allows users to debug, compare, and improve machine learning models driven by critical data slices. SliceTeller automatically discovers problematic slices in the data and helps the user understand why models fail. More importantly, we present an efficient algorithm, SliceBoosting, to estimate trade-offs when prioritizing the optimization over certain slices. Furthermore, our system empowers model developers to compare and analyze different model versions during model iterations, allowing them to choose the model version best suited to their applications. We evaluate our system with three use cases, including two real-world use cases of product development, to demonstrate the power of SliceTeller in the debugging and improvement of product-quality ML models.
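To make the notion of a data slice concrete, the following is a minimal Python sketch of per-slice evaluation: it groups validation records by a metadata attribute and flags slices whose accuracy falls well below the overall accuracy. It is an illustration only and is not SliceTeller's slice discovery method or the SliceBoosting algorithm. The function names, the `min_support` and `margin` thresholds, the record fields, and the toy data are all assumptions made for this example.

```python
from collections import defaultdict


def slice_stats(records, slice_keys):
    """Return {slice_value_tuple: (count, accuracy)} for the given metadata keys."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for rec in records:
        # A slice is identified by the values of the chosen metadata attributes.
        key = tuple(rec[k] for k in slice_keys)
        totals[key] += 1
        correct[key] += int(rec["label"] == rec["prediction"])
    return {key: (totals[key], correct[key] / totals[key]) for key in totals}


def problematic_slices(records, slice_keys, min_support=2, margin=0.1):
    """List slices whose accuracy is more than `margin` below overall accuracy.

    `min_support` and `margin` are hypothetical thresholds chosen for this sketch.
    """
    overall = sum(r["label"] == r["prediction"] for r in records) / len(records)
    flagged = [
        (key, count, acc)
        for key, (count, acc) in slice_stats(records, slice_keys).items()
        if count >= min_support and acc < overall - margin
    ]
    return sorted(flagged, key=lambda item: item[2])  # worst slice first


# Hypothetical toy validation set: an object classifier evaluated by weather.
RECORDS = (
    [{"weather": "sunny", "label": "car", "prediction": "car"}] * 4
    + [{"weather": "rain", "label": "car", "prediction": "car"}] * 1
    + [{"weather": "rain", "label": "car", "prediction": "truck"}] * 3
)

if __name__ == "__main__":
    print(problematic_slices(RECORDS, ["weather"]))
```

On this toy data the overall accuracy is 5/8, the "rain" slice reaches only 1/4, and the "sunny" slice is fully correct, so only the "rain" slice is flagged. Passing several keys (for example weather and distance) would define slices as intersections of attribute values.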