Extending the Nested Model for User-Centric XAI: A Design Study on GNN-based Drug Repurposing

Qianwen Wang, Kexin Huang, Payal Chandak, Marinka Zitnik, Nils Gehlenborg

View presentation:2022-10-21T15:00:00ZGMT-0600Change your timezone on the schedule page

2022-10-21T15:00:00Z

Prerecorded Talk

The live footage of the talk, including the Q&A, can be viewed on the session page, Visual Analytics of Health Data.

Fast forward

Abstract

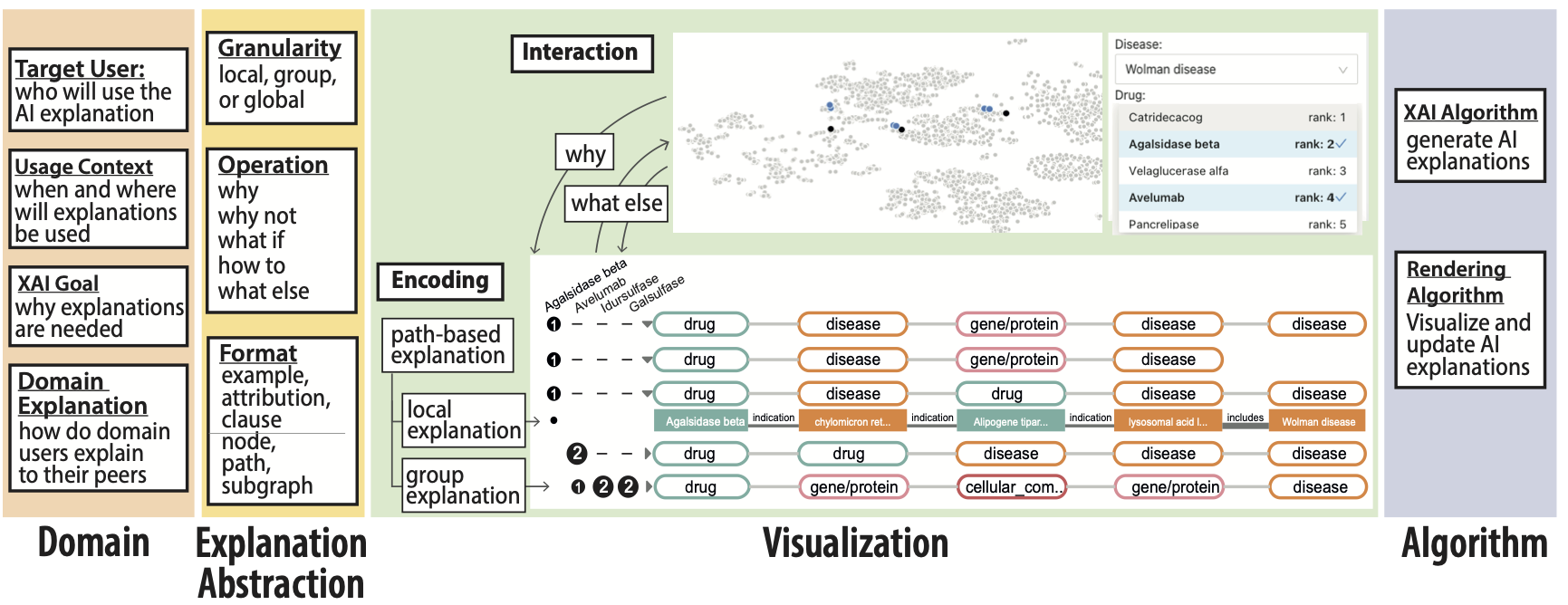

Whether AI explanations can help users achieve specific tasks efficiently (i.e., usable explanations) is significantly influenced by their visual presentation. While many techniques exist to generate explanations, it remains unclear how to select and visually present AI explanations based on the characteristics of domain users. This paper aims to understand this question through a multidisciplinary design study for a specific problem: explaining graph neural network (GNN) predictions to domain experts in drug repurposing, i.e., reuse of existing drugs for new diseases. Building on the nested design model of visualization, we incorporate XAI design considerations from a literature review and from our collaborators’ feedback into the design process. Specifically, we discuss XAI-related design considerations for usable visual explanations at each design layer: target user, usage context, domain explanation, and XAI goal at the domain layer; format, granularity, and operation of explanations at the abstraction layer; encodings and interactions at the visualization layer; and XAI and rendering algorithm at the algorithm layer. We present how the extended nested model motivates and informs the design of DrugExplorer, an XAI tool for drug repurposing. Based on our domain characterization, DrugExplorer provides path-based explanations and presents them both as individual paths and meta-paths for two key XAI operations, why and what else. DrugExplorer offers a novel visualization design called MetaMatrix with a set of interactions to help domain users organize and compare explanation paths at different levels of granularity to generate domain meaningful insights. We demonstrate the effectiveness of the selected visual presentation and DrugExplorer as a whole via a usage scenario, a user study, and expert interviews. From these evaluations, we derive insightful observations and reflections that can inform the design of XAI visualizations for other scientific applications.