Visual Auditor: Interactive Visualization for Detection and Summarization of Model Biases

David Munechika, Zijie J. Wang, Jack Reidy, Josh Rubin, Krishna Gade, Krishnaram Kenthapadi, Duen Horng Chau

2022-10-19T20:45:00Z

Prerecorded Talk

The live footage of the talk, including the Q&A, can be viewed on the session page for Visual Analytics, Decision Support, and Machine Learning.

Keywords

Machine Learning, Statistics, Modelling, and Simulation Applications

Abstract

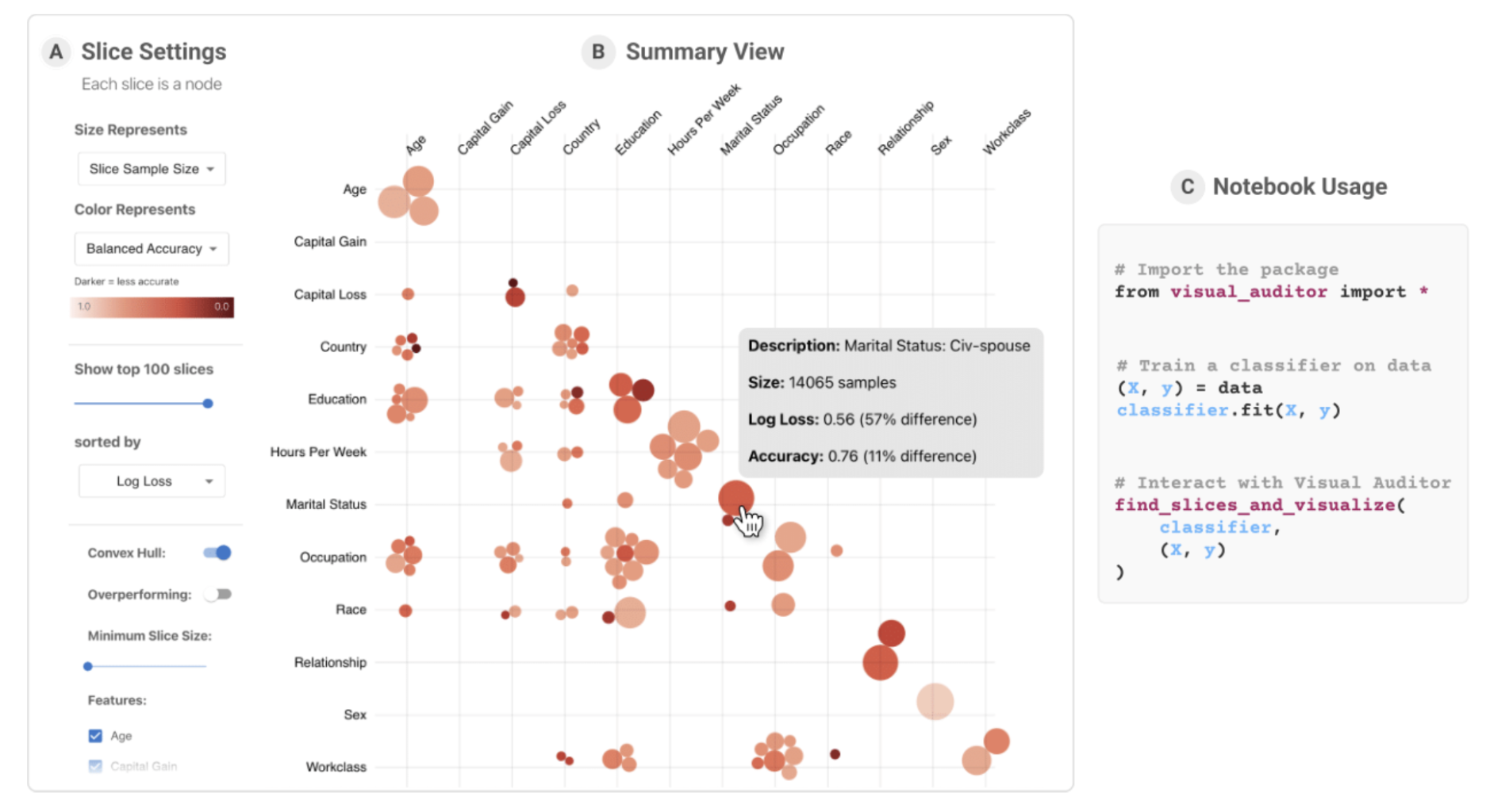

As machine learning (ML) systems become increasingly widespread, it is necessary to audit these systems for biases prior to their deployment. Recent research has developed algorithms for effectively identifying intersectional bias in the form of interpretable, underperforming subsets (or slices) of the data. However, these solutions and their insights are limited without a tool for visually understanding and interacting with the results of these algorithms. We propose Visual Auditor, an interactive visualization tool for auditing and summarizing model biases. Visual Auditor assists model validation by providing an interpretable overview of intersectional bias (bias that is present when examining populations defined by multiple features), details about relationships between problematic data slices, and a comparison between underperforming and overperforming data slices in a model. Our open-source tool runs directly in both computational notebooks and web browsers, making model auditing accessible and easily integrated into current ML development workflows. An observational user study in collaboration with domain experts at Fiddler AI highlights that our tool can help ML practitioners identify and understand model biases.
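
To make the idea of an underperforming intersectional slice concrete, here is a minimal sketch in plain Python. It is not Visual Auditor's implementation or API, and it is not the slice-finding algorithm from the research the abstract refers to; it is a brute-force illustration. The function names (`slice_accuracies`, `underperforming`), the feature names, and the toy data are all hypothetical. The data is constructed so that every single-feature slice matches the overall accuracy, while two two-feature intersections fail completely.

```python
from collections import defaultdict
from itertools import combinations


def slice_accuracies(rows, labels, preds, features, max_order=2):
    """Map each slice to (accuracy, size).

    A slice is a tuple of (feature, value) pairs; slices are built from
    every combination of up to `max_order` features (brute force).
    """
    hits = defaultdict(int)
    counts = defaultdict(int)
    for row, y, p in zip(rows, labels, preds):
        for k in range(1, max_order + 1):
            for combo in combinations(features, k):
                key = tuple((f, row[f]) for f in combo)
                counts[key] += 1
                hits[key] += int(y == p)
    return {key: (hits[key] / counts[key], counts[key]) for key in counts}


def underperforming(slices, overall, min_size=2):
    """Slices with at least `min_size` rows whose accuracy is below `overall`,
    sorted from worst to best."""
    candidates = (
        item for item in slices.items()
        if item[1][1] >= min_size and item[1][0] < overall
    )
    return sorted(candidates, key=lambda item: item[1][0])


# Toy, made-up data (hypothetical features and predictions).
rows = [
    {"sex": "F", "age": "young"},
    {"sex": "F", "age": "young"},
    {"sex": "F", "age": "old"},
    {"sex": "F", "age": "old"},
    {"sex": "M", "age": "young"},
    {"sex": "M", "age": "young"},
    {"sex": "M", "age": "old"},
    {"sex": "M", "age": "old"},
]
labels = [1, 1, 1, 1, 1, 1, 1, 1]
preds = [0, 0, 1, 1, 1, 1, 0, 0]

overall = sum(int(y == p) for y, p in zip(labels, preds)) / len(labels)
slices = slice_accuracies(rows, labels, preds, features=["sex", "age"])
worst = underperforming(slices, overall)

for key, (acc, size) in worst:
    description = " & ".join(f"{f}={v}" for f, v in key)
    print(f"{description}: accuracy={acc:.2f}, n={size}")
```

In this toy setup, auditing one feature at a time would reveal nothing, because each single-feature slice has the same accuracy as the whole dataset; only the intersections expose the problem. Exhaustive enumeration like this grows combinatorially with the number of features and values, which is one reason dedicated slice-finding algorithms and a visual summary of their output are useful.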