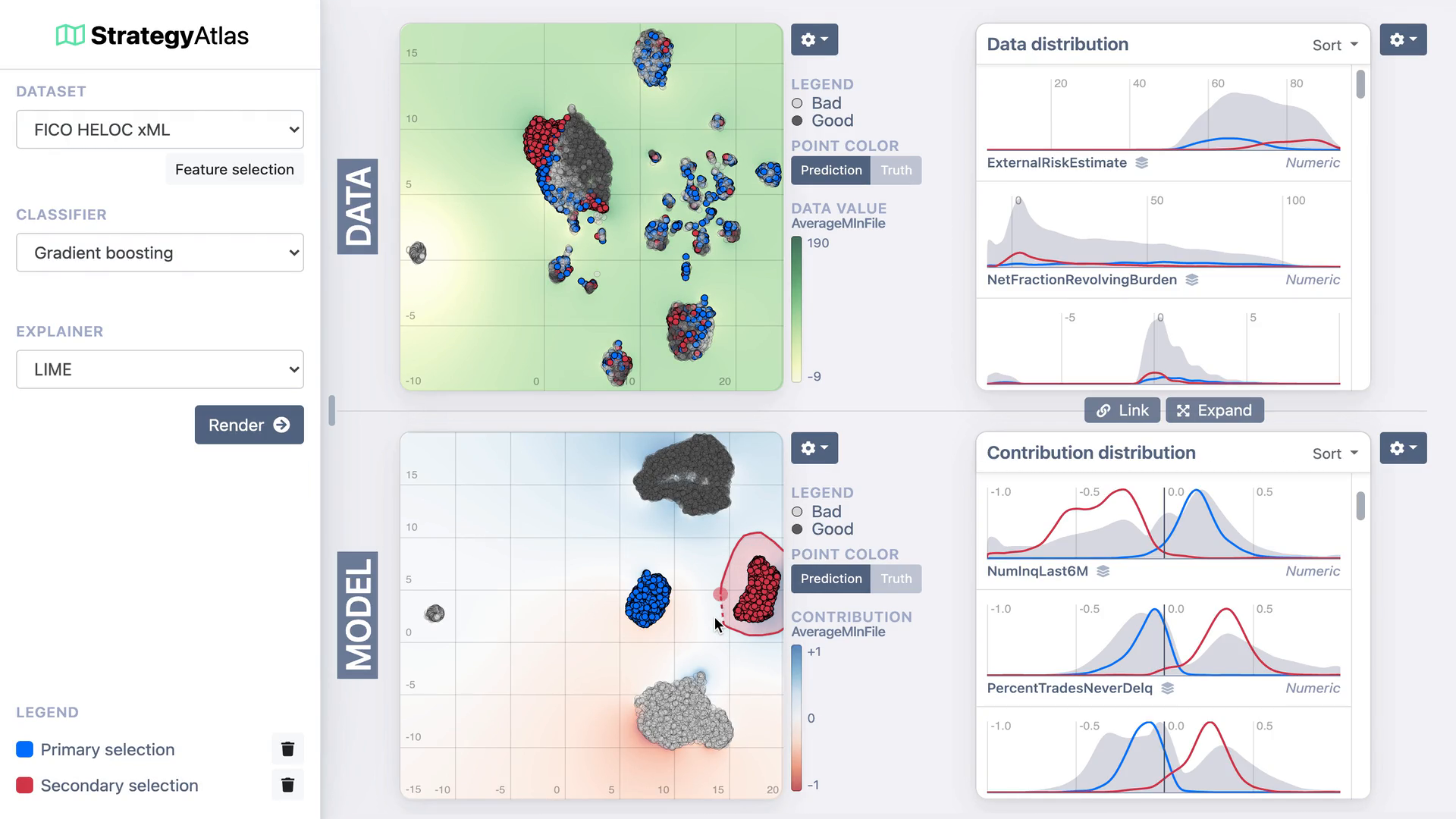

StrategyAtlas: Strategy Analysis for Machine Learning Interpretability

Dennis Collaris, Jarke J. van Wijk

View presentation: 2022-10-20T15:45:00Z

Prerecorded Talk

The live footage of the talk, including the Q&A, can be viewed on the session page, Interpreting Machine Learning.

Keywords

Machine learning, Visual analytics, Explainable AI

Abstract

Businesses in high-risk environments have been reluctant to adopt modern machine learning approaches due to their complex and uninterpretable nature. Most current solutions provide local, instance-level explanations, but this is insufficient for understanding the model as a whole. In this work, we show that strategy clusters (i.e., groups of data instances that are treated distinctly by the model) can be used to understand the global behavior of a complex ML model. To support effective exploration and understanding of these clusters, we introduce StrategyAtlas, a system designed to analyze and explain model strategies. Furthermore, it supports multiple ways to utilize these strategies for simplifying and improving the reference model. In collaboration with a large insurance company, we present a use case in automatic insurance acceptance, and show how professional data scientists were enabled to understand a complex model and improve the production model based on these insights.
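To make the notion of a strategy cluster concrete, the following is a minimal sketch and not the StrategyAtlas pipeline, which the abstract does not detail. It assumes a hypothetical toy model (`toy_model`) that deliberately uses two different strategies, approximates per-instance explanations with finite-difference sensitivities (`local_attributions`, an illustrative stand-in for whatever explanation technique a real system would use), and groups instances by those explanations with a small hand-written k-means (`kmeans`). All names and the synthetic data are assumptions made for illustration.

```python
import numpy as np


def toy_model(X):
    """Hypothetical black box with two strategies:
    relies on feature 0 when feature 2 is positive, otherwise on feature 1."""
    return np.where(X[:, 2] > 0, 3.0 * X[:, 0], -2.0 * X[:, 1])


def local_attributions(model, X, eps=1e-4):
    """Per-instance sensitivity of the output to each feature (finite differences).
    This is an assumed, simplistic stand-in for a local explanation method."""
    base = model(X)
    attr = np.zeros_like(X)
    for j in range(X.shape[1]):
        X_shift = X.copy()
        X_shift[:, j] += eps
        attr[:, j] = (model(X_shift) - base) / eps
    return attr


def kmeans(points, k, iters=10):
    """Small k-means with deterministic farthest-point initialization."""
    centers = [points[0]]
    for _ in range(1, k):
        # Distance of every point to its nearest already-chosen center.
        d = np.min([np.linalg.norm(points - c, axis=1) for c in centers], axis=0)
        centers.append(points[d.argmax()])
    centers = np.array(centers)
    for _ in range(iters):
        dists = np.linalg.norm(points[:, None, :] - centers[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        for c in range(k):
            if np.any(labels == c):
                centers[c] = points[labels == c].mean(axis=0)
    return labels


# Synthetic data: feature 2 alternates between +1 and -1, so both strategies occur.
rng = np.random.default_rng(0)
n = 200
X = rng.normal(size=(n, 3))
X[:, 2] = np.where(np.arange(n) % 2 == 0, 1.0, -1.0)

# Cluster instances by how the model treats them, not by their raw feature values.
attributions = local_attributions(toy_model, X)
labels = kmeans(attributions, k=2)

for c in range(2):
    print("cluster", c, "mean attribution:", attributions[labels == c].mean(axis=0))
```

In this toy setting the clusters found in explanation space line up with the two branches of the model, which is the sense in which a strategy cluster groups instances that the model treats distinctly. A real model would call for a proper explanation method and a more careful choice of clustering.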