ARShopping: In-Store Shopping Decision Support Through Augmented Reality and Immersive Visualization

Bingjie Xu, Shunan Guo, Eunyee Koh, Jane Hoffswell, Ryan Rossi, Fan Du

View presentation: 2022-10-20T15:03:00Z


Prerecorded Talk

The live footage of the talk, including the Q&A, can be viewed on the page for the session "Personal Visualization, Theory, Evaluation, and eXtended Reality."


Keywords

Human-centered computing—Visualization—Visualization systems and tools; Human-centered computing—Human computer interaction (HCI)—Interaction paradigms—Mixed / augmented reality

Abstract

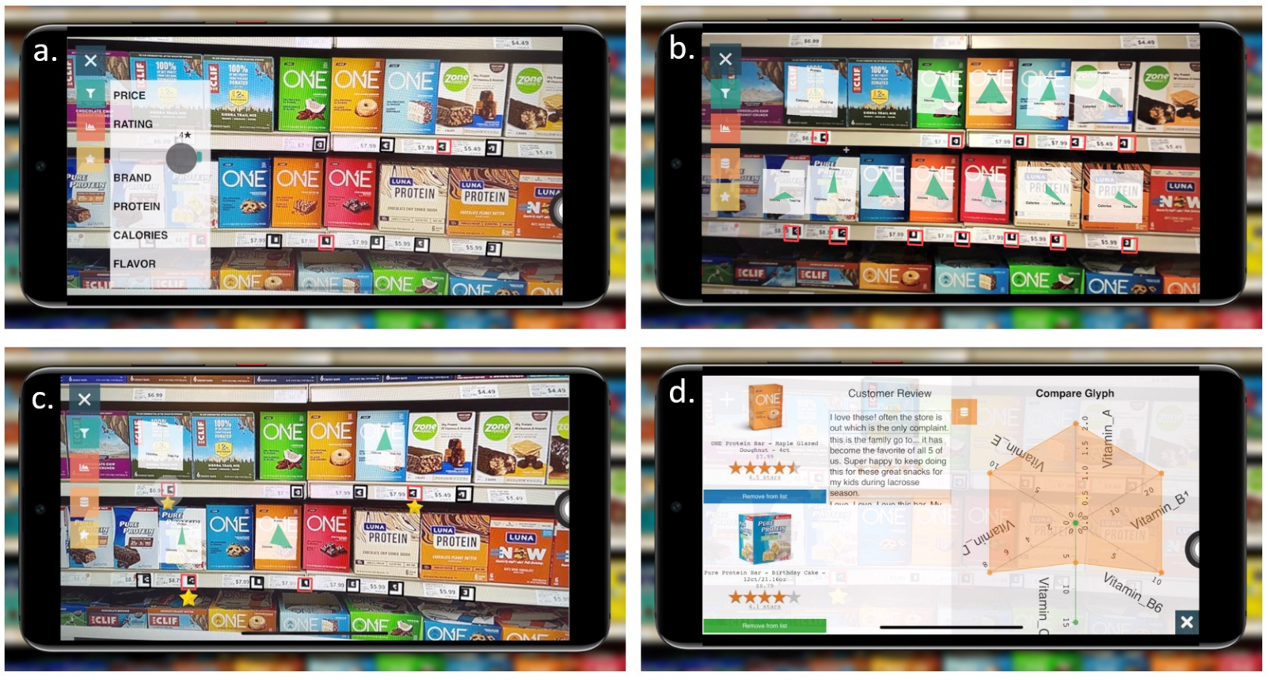

Online shopping gives customers boundless options to choose from, backed by extensive product details and customer reviews, all from the comfort of home; yet no amount of detailed online information can outweigh the instant gratification and hands-on understanding of a product that physical stores provide. However, making purchasing decisions in physical stores can be challenging due to the large number of similar alternatives and the limited accessibility of relevant product information (e.g., features, ratings, and reviews). In this work, we present ARShopping: a web-based prototype that visually communicates detailed product information from the online setting on portable smart devices (e.g., phones, tablets, glasses) within the physical space at the point of purchase. The prototype uses augmented reality (AR) to identify products and display detailed information, helping consumers make purchasing decisions that fulfill their needs while decreasing decision-making time. In particular, we use a data fusion algorithm to improve the precision of product detection; we then integrate AR visualizations into the scene to facilitate comparisons across multiple products and features. We designed our prototype based on interviews with 14 participants to better understand its utility and ease of use.
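The abstract does not describe how its data fusion algorithm works. Purely as an illustration of how fusing detections can raise precision, the sketch below assumes a per-frame product detector that emits (label, confidence) pairs and applies a generic technique, temporal confidence-weighted voting. A product label is accepted only once it has been detected consistently across several recent camera frames, so a single-frame false positive is suppressed. The names `Detection`, `FusedResult`, `fuseDetections`, and the parameters `windowSize`, `minSupport`, and `minScore` are hypothetical and are not taken from ARShopping.

```typescript
// Hypothetical per-frame output of some product detector (not ARShopping's actual API).
interface Detection {
  label: string;      // product identifier predicted for this frame
  confidence: number; // detector score in [0, 1]
}

interface FusedResult {
  label: string;   // winning product label
  score: number;   // mean confidence over the window (frames without the label count as 0)
  support: number; // number of frames in which the label was detected
}

/**
 * Illustrative temporal voting over the most recent frames (an assumed technique,
 * not the paper's algorithm). Returns the best-scoring label only if it appears in
 * at least `minSupport` frames and its averaged score is at least `minScore`;
 * otherwise returns null, meaning "not confident enough to show AR overlays yet".
 */
function fuseDetections(
  frames: Detection[][],
  windowSize = 10,
  minSupport = 3,
  minScore = 0.5,
): FusedResult | null {
  const recent = frames.slice(-windowSize);
  if (recent.length === 0) return null;

  const totals = new Map<string, { sum: number; support: number }>();
  for (const frame of recent) {
    // Keep only the best detection per label within one frame so duplicates don't double-count.
    const best = new Map<string, number>();
    for (const d of frame) {
      best.set(d.label, Math.max(best.get(d.label) ?? 0, d.confidence));
    }
    best.forEach((conf, label) => {
      const t = totals.get(label) ?? { sum: 0, support: 0 };
      t.sum += conf;
      t.support += 1;
      totals.set(label, t);
    });
  }

  let winner: FusedResult | null = null;
  totals.forEach(({ sum, support }, label) => {
    const score = sum / recent.length; // average over all frames in the window
    if (support < minSupport || score < minScore) return; // reject weak or sporadic labels
    if (winner === null || score > winner.score) {
      winner = { label, score, support };
    }
  });
  return winner;
}

// Usage: "cereal-b" wins one frame with high confidence but is seen only once,
// so the consistently detected "cereal-a" is chosen instead.
const demoFrames: Detection[][] = [
  [{ label: "cereal-a", confidence: 0.9 }],
  [{ label: "cereal-a", confidence: 0.8 }, { label: "cereal-b", confidence: 0.95 }],
  [{ label: "cereal-a", confidence: 0.85 }],
  [],
];
console.log(fuseDetections(demoFrames, 10, 2, 0.5)?.label); // prints "cereal-a"
```

The design choice here is to trade a short delay (waiting for several frames) for fewer mislabeled products; in a shopping setting, briefly showing no overlay is usually less harmful than showing information for the wrong item.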